The Agentic Age: Mouths vs. Hands

One of the most resonant and repeated idioms shared at the summit was simple: “Chatbots think; Agents do.”

For the past two years, we have grown accustomed to using LLMs as tools for generating text. Agentic AI changes this premise. Agents are bots designed to complete real-world tasks with only basic direction: coding applications from front end to back end, scheduling complex meetings, or executing workflows. We are graduating from software that chats to software that acts. Chatbots as we know them act as the brain, reasoning about how to approach a prompt, and as the mouth that communicates those thoughts. Agents are the “hands” that act.

A powerful demonstration of this shift came from a Department of Education representative, who showed off an agent capable of executing complex retrieval tasks across hundreds of academic datasets. The goal was to make this disjointed data accessible and comprehensible for everyone, not just the data scientists and programmers who understand how to make the relevant API calls. One example showed the agent autonomously generating granular comparisons of school acceptance rates, filtering for variables like in-state versus out-of-state applicants, all from a simple, plain-language query.
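To make the idea concrete, here is a minimal sketch of the step that happens after an agent has translated a plain-language question into a structured request. It is not the Department of Education's implementation: the `Record` type, the `acceptance_rates` function, the shape of `query`, and every number in `RECORDS` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Record:
    school: str
    residency: str  # "in_state" or "out_of_state"
    applicants: int
    admitted: int


# Invented toy numbers for illustration only; not real admissions data.
RECORDS = [
    Record("School A", "in_state", 1000, 600),
    Record("School A", "out_of_state", 500, 200),
    Record("School B", "in_state", 800, 200),
    Record("School B", "out_of_state", 400, 80),
]


def acceptance_rates(records: List[Record], residency: Optional[str] = None) -> Dict[str, float]:
    """Return admitted / applicants per school, optionally filtered by residency."""
    totals: Dict[str, List[int]] = {}
    for r in records:
        if residency is not None and r.residency != residency:
            continue
        t = totals.setdefault(r.school, [0, 0])
        t[0] += r.applicants
        t[1] += r.admitted
    # Skip schools with zero applicants to avoid dividing by zero.
    return {s: adm / app for s, (app, adm) in totals.items() if app > 0}


# Hypothetical structured request an agent might derive from the question
# "How do acceptance rates compare for out-of-state applicants?"
query = {"metric": "acceptance_rate", "filter": {"residency": "out_of_state"}}
print(acceptance_rates(RECORDS, query["filter"]["residency"]))
```

The hard part, turning the plain-language question into `query`, is the model's job and is deliberately left out here; the sketch only shows that what comes out the other side is an ordinary filtered aggregation.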

The Adoption Dilemma: FOMO vs. FOMU

A major theme among leadership, particularly on the government side, was the tension between FOMO (Fear of Missing Out) and FOMU (Fear of Messing Up).

There’s pressure not to be left behind as Agents grow in popularity. However, in the public sector especially, there is a necessary caution against putting reckless faith in agentic bots too early. We are still facing the challenge of differentiating “AI Slop” (low-quality, hallucinated output) from valuable, actionable data. Paradoxically, as Agents become more capable, human trust in them often dips initially because the “black box” gets bigger. We are currently in a “semi-autonomous” phase. The technology is capable, but the trust required to leave an AI completely on its own isn’t always there.

The industry consensus on how to build that trust is to start with low-risk, high-value automation.

The most successful organizations aren’t giving bots critical, life-or-death tasks and expecting perfection. They are assigning them low-stakes, highly repetitive tasks that save huge amounts of human time. This builds the track record necessary to move toward full autonomy.
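One way to picture this "semi-autonomous" approach is a simple routing rule: the agent handles a task on its own only when the task's risk is at or below a ceiling that the organization raises as trust builds. The sketch below is illustrative only; the `Risk` levels, the `Task` type, and the `route` function are hypothetical names, not something presented at the summit.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Task:
    name: str
    risk: Risk


def route(task: Task, autonomy_ceiling: Risk = Risk.LOW) -> str:
    """Return who handles the task: the agent alone, or a human reviewer."""
    if task.risk.value <= autonomy_ceiling.value:
        return "agent"
    return "human_review"


# Hypothetical example tasks.
tasks = [
    Task("tag incoming support emails", Risk.LOW),
    Task("draft a benefits eligibility decision", Risk.HIGH),
]
print({t.name: route(t) for t in tasks})
```

Raising `autonomy_ceiling` from `Risk.LOW` to `Risk.MEDIUM` is the code-level equivalent of extending trust once the agent has a track record.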

Leadership is the Differentiator

Technical capability is only half the battle; culture is the other. The breakout sessions repeatedly illustrated the need for enthusiastic leadership buy-in as a prerequisite for genuine AI success.

When leaders view AI as a partner rather than a replacement or a gimmick, adoption hurdles become far easier to clear. Stakeholders also need to be involved from the start: be upfront with them about what they should and shouldn’t expect an Agent to handle.

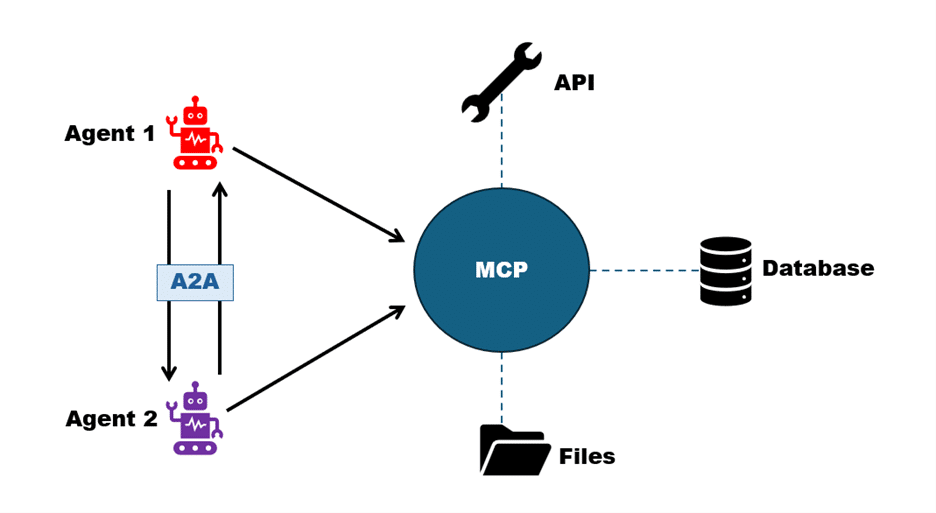

Understanding MCP and A2A

Agents don’t automatically come equipped to interact with the software tools they need to complete a task. Sticking to the earlier analogy, if an Agent is the “hand” that does the work, MCP (Model Context Protocol) is the standard handle that lets that hand pick up any tool.

- The Problem: Right now, if you want an AI to read your Google Calendar, you have to write specific code for that. To read a SQL database, you write different code. It’s like having a hand that changes shape every time it needs to hold a different tool—inefficient and messy.

- The Solution: MCP creates a “USB-C port” for AI. It standardizes the connection between AI models and data sources (like your internal documents, Slack, or databases).

- Why it matters: It reduces the need to build custom bridges for every single app and allows an Agent to “plug in” to your organization’s data and get to work.
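The “USB-C port” idea can be sketched in a few lines: every tool, whatever it wraps, is discovered and invoked through the same two operations. This is a toy stand-in written in plain Python, not the MCP SDK or its wire format (the actual protocol exchanges JSON-RPC messages between a client and a server). `ToolRegistry`, `read_calendar`, `count_rows`, and the in-memory data are all hypothetical.

```python
from typing import Any, Callable, Dict, List


class ToolRegistry:
    """Toy stand-in for an MCP-style server: every tool is listed and called the same way."""

    def __init__(self) -> None:
        self._tools: Dict[str, Dict[str, Any]] = {}

    def register(self, name: str, description: str, handler: Callable[..., Any]) -> None:
        self._tools[name] = {"description": description, "handler": handler}

    def list_tools(self) -> List[Dict[str, str]]:
        # The agent discovers what is available without tool-specific code.
        return [{"name": n, "description": t["description"]} for n, t in self._tools.items()]

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name]["handler"](**arguments)


# Hypothetical tools backed by in-memory data instead of real services.
def read_calendar(day: str) -> List[str]:
    events = {"2026-04-01": ["Standup 09:00", "Budget review 14:00"]}
    return events.get(day, [])


def count_rows(table: str) -> int:
    tables = {"grants": 3}
    return tables.get(table, 0)


registry = ToolRegistry()
registry.register("read_calendar", "List events for a day", read_calendar)
registry.register("count_rows", "Count rows in a table", count_rows)

print(registry.list_tools())
print(registry.call_tool("read_calendar", {"day": "2026-04-01"}))
print(registry.call_tool("count_rows", {"table": "grants"}))
```

The calendar and the database sit behind the same `list_tools` / `call_tool` surface, so adding a third data source means registering one more handler rather than teaching the agent a new integration.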

Protocol Collaboration

Another protocol, being pushed heavily by Google, is A2A: Agent to Agent.

- The Problem: Today’s Agents are siloed. An “HR Agent” built by one vendor usually can’t talk to a “Payroll Agent” built by another. They are like two employees in the same office who speak completely different languages.

- The Solution: A2A is the common language (protocol) that allows them to communicate, hand off tasks, and coordinate.

- Why it matters: This is the key to the “Multi-Agent” future discussed at the summit. You don’t want one giant, expensive “Super Bot” that does everything. You want a team of smaller, specialized bots, much as microservices are replacing monolithic architectures in application development. A2A makes collaboration between them possible.
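The hand-off idea can be illustrated with a small sketch in which each agent advertises the skills it offers and a shared message shape lets one agent pass work to another. This is a simplified, in-process illustration and not the A2A specification: `Message`, `Agent`, `Directory`, and the skill names are hypothetical, and real agents would communicate over a network.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Message:
    sender: str
    skill: str
    payload: Dict[str, str]


@dataclass
class Agent:
    name: str
    skills: List[str]
    inbox: List[Message] = field(default_factory=list)

    def handle(self, msg: Message) -> str:
        # A real agent would do the work; this one just records the request.
        self.inbox.append(msg)
        return f"{self.name} accepted '{msg.skill}' from {msg.sender}"


class Directory:
    """Lets agents find each other by advertised skill rather than by vendor."""

    def __init__(self) -> None:
        self._agents: Dict[str, Agent] = {}

    def register(self, agent: Agent) -> None:
        self._agents[agent.name] = agent

    def find(self, skill: str) -> Agent:
        for agent in self._agents.values():
            if skill in agent.skills:
                return agent
        raise LookupError(f"no agent advertises skill: {skill}")

    def hand_off(self, msg: Message) -> str:
        return self.find(msg.skill).handle(msg)


directory = Directory()
hr = Agent("hr-agent", ["onboard_employee"])
payroll = Agent("payroll-agent", ["set_up_direct_deposit"])
directory.register(hr)
directory.register(payroll)

reply = directory.hand_off(
    Message("hr-agent", "set_up_direct_deposit", {"employee_id": "E-001"})
)
print(reply)  # payroll-agent accepted 'set_up_direct_deposit' from hr-agent
```

Because both agents agree on the `Message` shape, the HR agent never needs to know who built the payroll agent or how it works internally, only which skill to ask for.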

Final Thoughts

The Agentic + GovAI summit made one thing clear: The novelty phase of AI is ending, and practical utilities are already here.

As partners to the federal government, we are positioned to bridge the gap between cutting-edge capability and safe, dependable applications. Our job is to build the trust, the architecture, and the guardrails that allow these Agents to work safely and effectively.

Interested in starting a conversation about how we can operationalize an effective AI future for your unique needs? Connect with Analytica to see our solutions in action and learn how we bridge the gap between capability and compliance.

Jack MacPherson | 3/31/2026
